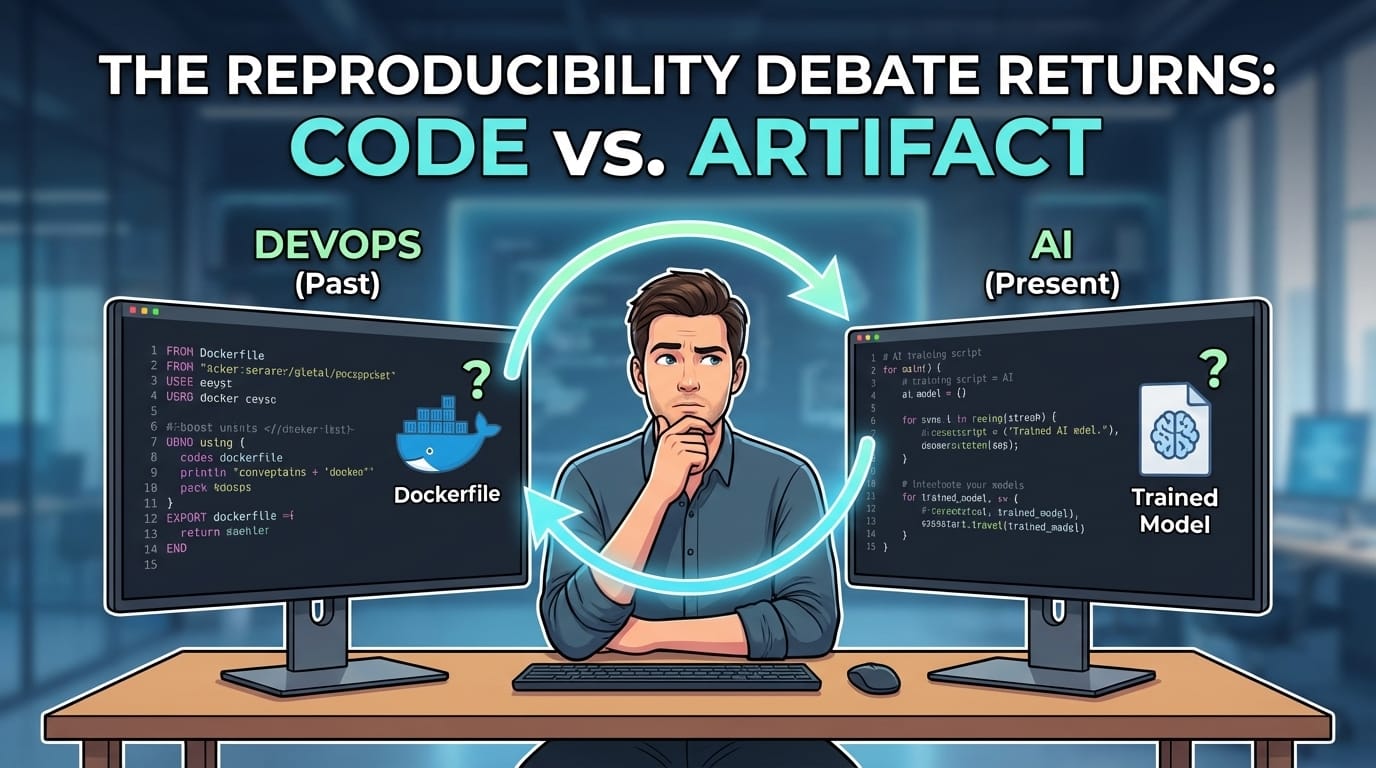

The DevOps Debate We Had Six Years Ago — That I’m Now Watching Happen Again in AI

About six years ago I was in the middle of a surprisingly heated debate on a team. The question sounded simple:

If something breaks in production, what should you keep so you can reproduce the issue?

Specifically, we were talking about containers. Should we keep the Dockerfile and Helm charts that built and deployed the container? Or should we keep the actual container image that ran in production?

What made the discussion interesting is that the room didn’t split into two camps. It split into three.

Argument #1: “The Dockerfile Is Enough”

The first group argued that the Dockerfile was the source of truth. If something broke, we could always rebuild the container from the instructions. That’s the whole point of Infrastructure as Code, right? The logic looked like this:

FROM ubuntu:latest

RUN apt-get update && apt-get install -y python3

Why store artifacts if the instructions already exist?

At the time, that felt very aligned with early DevOps thinking.

Argument #2: “Pin Every Version”

The counterargument came quickly. The real problem wasn’t Dockerfiles — it was floating dependencies. So the solution was simple: Pin everything.

FROM ubuntu:22.04

RUN apt-get update && apt-get install -y python3=3.10.6

If every dependency had a specific version, rebuilding the container later should produce the same result.

And to be fair, this dramatically improves reproducibility. But it still doesn’t fully solve the problem.
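To make "pin everything" concrete, here is a minimal Python sketch of the kind of check a team could run against a Dockerfile. The function name `floating_references` and its rules are assumptions for illustration only: it handles just `FROM` lines and `apt-get install` commands, and it is not a real linter.

```python
def floating_references(dockerfile: str) -> list[str]:
    """Return a finding for each floating base image or unpinned apt package."""
    findings = []
    for line in dockerfile.splitlines():
        tokens = line.split()
        if not tokens:
            continue
        instruction = tokens[0].upper()
        if instruction == "FROM" and len(tokens) > 1:
            image = tokens[1]
            # Drop the registry/path so a port colon is not mistaken for a tag.
            name = image.rsplit("/", 1)[-1]
            untagged_or_latest = ":" not in name or name.endswith(":latest")
            if "@sha256:" not in image and untagged_or_latest:
                findings.append(f"floating base image: {image}")
        elif instruction == "RUN" and "apt-get" in tokens and "install" in tokens:
            for token in tokens[tokens.index("install") + 1:]:
                if token in ("&&", ";"):
                    break  # end of the install command
                if not token.startswith("-") and "=" not in token:
                    findings.append(f"unpinned package: {token}")
    return findings


floating = "FROM ubuntu:latest\nRUN apt-get update && apt-get install -y python3"
pinned = "FROM ubuntu:22.04\nRUN apt-get install -y python3=3.10.6"

print(floating_references(floating))
print(floating_references(pinned))
```

The first Dockerfile yields two findings and the second yields none. A clean result only says the instructions are pinned. It says nothing about whether a later rebuild matches what actually shipped.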

Argument #3: “Mirror Everything Internally”

Then someone raised an even stronger approach. Instead of relying on public package repositories and registries, mirror everything internally: internal base images, internal package repositories, and controlled dependency sources.

Now the Dockerfile might look like:

FROM registry.internal/ubuntu:22.04

RUN apt-get update && apt-get install -y python3=3.10.6

At that point it felt like we had closed every gap.

Pinned dependencies | Controlled repositories | Deterministic builds

Problem solved. Or so we thought.

The Subtle Problem

Over time, teams started noticing something important: Rebuilding a container is not the same thing as reproducing a container. Even with pinned dependencies and internal mirrors, rebuilding still produces a new artifact. And when production systems fail, engineers eventually learn something the hard way: You don’t want something that is probably equivalent.

You want the exact thing that ran.

Where the Industry Landed

Over time the industry converged on a simpler model. Instead of rebuilding containers to debug them, teams started keeping the container image itself. Modern deployment pipelines now look something like this:

Build → Push Image → Deploy by Digest

Instead of deploying tags like:

myapp:latest

Teams deploy immutable references like:

myapp@sha256:9f3c1c...

That digest represents the exact image that was built. Pull it later, and you get the exact same bits. Which makes debugging production dramatically simpler.
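A toy Python sketch of why a digest behaves this way. Everything here is a stand-in: `build_image`, `push`, and the in-memory `registry` are hypothetical helpers, and the "image" is just a JSON blob that embeds a build timestamp, one of several reasons real rebuilds can differ byte for byte.

```python
import hashlib
import json


def build_image(instructions: str, build_time: str) -> bytes:
    """Toy build: the artifact embeds the instructions plus build-time metadata."""
    manifest = {"instructions": instructions, "created": build_time}
    return json.dumps(manifest, sort_keys=True).encode("utf-8")


def digest_of(artifact: bytes) -> str:
    """Content address: the SHA-256 of the exact bytes."""
    return "sha256:" + hashlib.sha256(artifact).hexdigest()


registry: dict[str, bytes] = {}  # hypothetical in-memory registry


def push(artifact: bytes) -> str:
    """Store the artifact under its digest and return that digest."""
    digest = digest_of(artifact)
    registry[digest] = artifact
    return digest


instructions = "FROM registry.internal/ubuntu:22.04\nRUN apt-get install -y python3=3.10.6"

shipped = build_image(instructions, build_time="2024-01-01T00:00:00Z")
shipped_digest = push(shipped)

# Months later: same instructions, new build.
rebuilt = build_image(instructions, build_time="2024-06-01T00:00:00Z")

print(registry[shipped_digest] == shipped)   # True: pulling by digest returns the same bits
print(digest_of(rebuilt) == shipped_digest)  # False: the rebuild is a different artifact
```

The instructions never changed, yet the rebuild gets a different digest. Only the stored artifact is the thing that ran.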

The Shift in Thinking

Looking back, the debate wasn’t really about Docker. It was about a deeper engineering principle:

Instructions describe intent.

Artifacts capture reality.

A Dockerfile describes how you intended to build a system. A container image shows what actually ran. Modern engineering practices increasingly reflect that distinction.

We now treat artifacts as first-class citizens:

• container image digests

• dependency lockfiles

• build artifacts

• infrastructure state

• software bills of materials (SBOMs)

All for the same reason: reproducibility.

And Now I’m Watching the Same Debate Happen Again

Interestingly, I’m starting to see the same discussion happen again in AI systems. Except the question now sounds like this:

When an AI system produces an answer, what should you keep?

The prompt that was sent to the model?

Or the actual response that was generated?

At first glance the prompt seems like enough. But anyone who has used ChatGPT has seen the problem. Ask the same prompt twice and you can get two different answers. Because behind the scenes, a lot can change:

• the model version

• system prompts

• temperature settings

• retrieval context

• tools available to the model

Which means the same prompt later may produce a completely different result. Sound familiar?
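One way to picture "keeping the artifact" for an AI system is a record that stores the response next to everything from the list above that shaped it. This is a minimal Python sketch under stated assumptions: `InteractionRecord`, its field names, and the example values are hypothetical, not any vendor's API.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class InteractionRecord:
    """One AI interaction: the instructions, the context, and what was produced."""
    prompt: str
    response: str
    model_version: str
    system_prompt: str
    temperature: float
    retrieval_context: tuple[str, ...] = ()
    tools: tuple[str, ...] = ()

    def to_json(self) -> str:
        # Sorted keys give a stable serialization for hashing and storage.
        return json.dumps(asdict(self), sort_keys=True)

    def digest(self) -> str:
        return "sha256:" + hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


# Hypothetical values: the same prompt and settings, two different responses.
shared = dict(
    prompt="Summarize the incident report.",
    model_version="example-model-v1",
    system_prompt="You are a helpful assistant.",
    temperature=0.7,
)
first = InteractionRecord(response="The outage began at the load balancer.", **shared)
second = InteractionRecord(response="A config change caused the outage.", **shared)

print(first.digest() == second.digest())  # False: same prompt, different artifact
```

Storing only `prompt` would make the two runs indistinguishable. Storing the whole record keeps what was actually produced, along with the conditions it was produced under.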

It’s the exact same lesson we learned with containers.

Engineering Keeps Learning the Same Lesson

If you zoom out, this pattern keeps repeating.

Every time systems become complex enough, teams rediscover the same truth: Instructions are not enough to reproduce reality. Artifacts capture what actually happened.

The Principle I’ve Come to Trust

Whenever something runs in production, the safest thing to keep is the artifact that actually ran.

Because instructions tell you what you planned to build.

Artifacts tell you what you actually shipped.